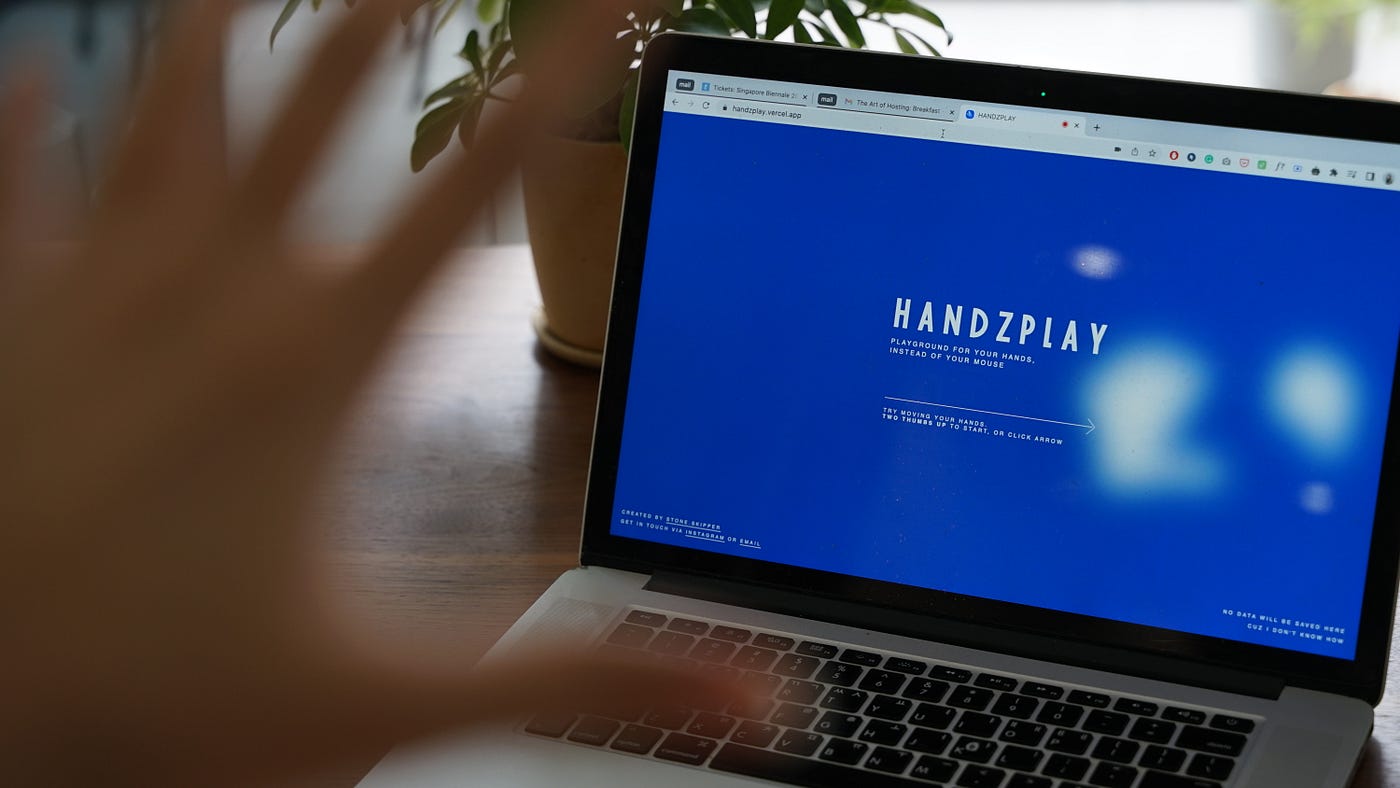

Building Handzplay, a hand gesture interaction design tool, and what I learned from it

What is Handzplay?

I recently built and released Handzplay, a website where you can try out and explore hand gesture interactions. It started as a self-initiated project for me to play around with hand gestures, but I soon realized I wanted to go one step further and let others play with it and create their own interactions.

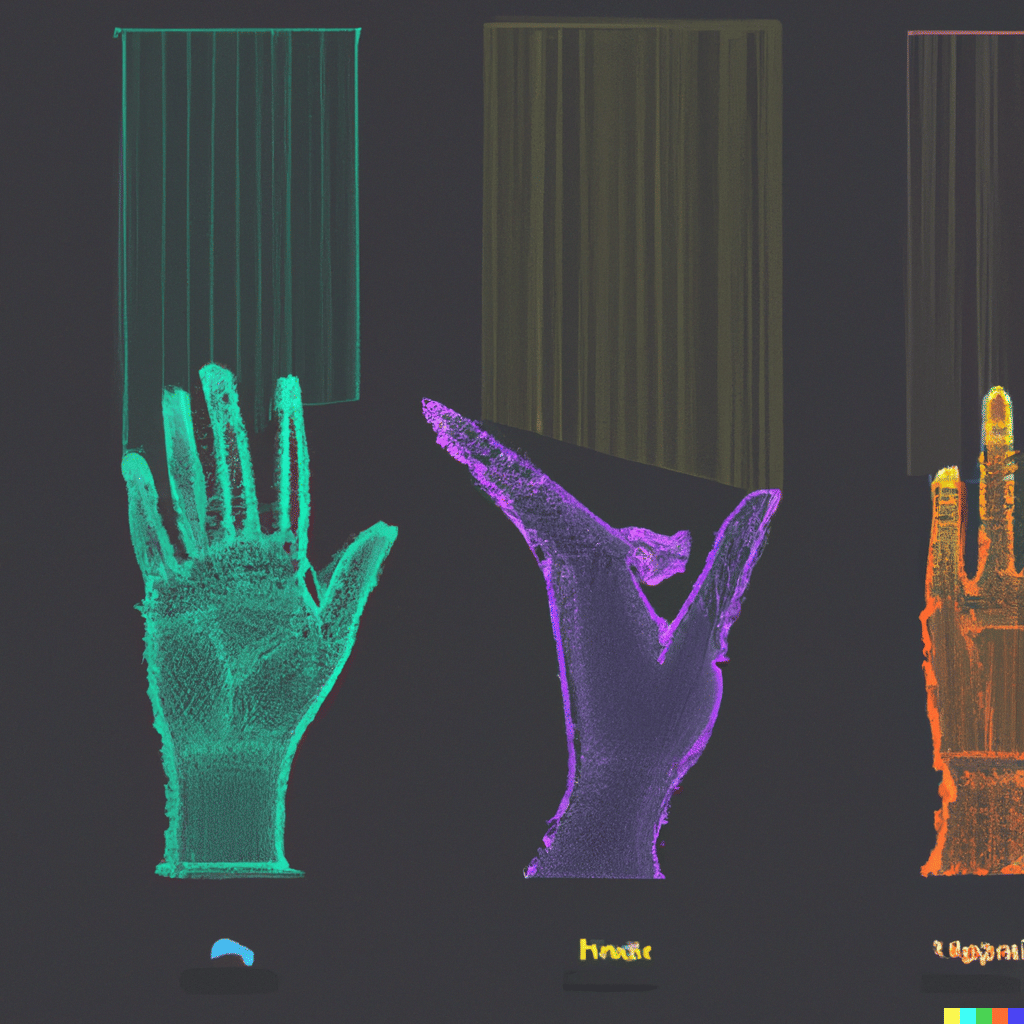

You might have already seen images like this one, showing how ML is used to detect users’ hands, body movements, and facial expressions from photos or camera input. Though there are more advanced sensors and products you can purchase, I was fascinated by the fact that anyone with a laptop or webcam can see detection working in the demos provided by TensorFlow.

As an interaction designer, I was naturally intrigued to build some interactions out of it. The biggest difference between a hand detection demo and an interaction demo is that the latter gives users control, and feedback on that control, through their hands. I wanted to turn a technology demo into a tool people could actually experience.

So what can you do on Handzplay?

Handzplay aims to let everyone explore the potential of hand gesture interactions. On any device with a camera, you can use your fingers and hands to interact, and create new ‘rules’ that define which trigger causes which reaction.

Instead of defining a set of interactions myself, as I did with my previous project, I wanted to build a ‘tool’ that everyone can try and explore.

You can start from the pre-configured settings in a template, or begin with a blank page and create a new ‘rule’. A rule consists of two parts: a trigger, which defines what kind of pose, action, or finger-to-finger distance sets something off, and a reaction, which is the type of response the trigger prompts.
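One way to picture such a rule in code, as a minimal sketch: a trigger is a predicate over the current frame of hand keypoints, and a reaction is whatever the rule fires when that predicate holds. This is a language-neutral illustration written in Python, not Handzplay’s implementation; the names `Rule` and `fired_reactions`, the keypoint keys, and the `"play_sound"` reaction are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Assumed input: one frame of hand keypoints, name -> (x, y) in screen pixels.
Frame = Dict[str, Tuple[float, float]]


@dataclass
class Rule:
    """A rule pairs a trigger (a predicate over the current frame) with a reaction."""
    trigger: Callable[[Frame], bool]
    reaction: str  # hypothetical reaction identifier, e.g. "play_sound"


def fired_reactions(rules: List[Rule], frame: Frame) -> List[str]:
    """Return the reaction of every rule whose trigger holds for this frame."""
    return [rule.reaction for rule in rules if rule.trigger(frame)]


# Example rule: screen y grows downward, so a smaller y means "higher on screen".
# Fires when the index fingertip is more than 100px above the wrist.
raise_index = Rule(
    trigger=lambda f: f["index_tip"][1] < f["wrist"][1] - 100,
    reaction="play_sound",
)
frame = {"wrist": (320.0, 400.0), "index_tip": (330.0, 250.0)}
print(fired_reactions([raise_index], frame))  # ['play_sound']
```

Keeping triggers as plain predicates means pose, action, and finger-distance triggers can all plug into the same rule structure.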

Lessons learned

If you’ve already tried Handzplay yourself, you might have some impressions, takeaways, and hunches about designing for gesture interactions (I’m curious to hear your thoughts!). I’ve spent quite a lot of time in front of my laptop trying out all these hand postures and motions, so here are some of my own learnings and takeaways.

1. Gesture interaction might look cool, but it’s not for everything

We’ve long imagined using hand gestures to control devices, but we may have overrated and romanticized the idea because we keep seeing it in cool, futuristic sci-fi movies. When we apply it to our daily lives, there are many cases where hand gestures are not appropriate.

Imagine doing the following activities with gestures. You might already feel the soreness in your fingers and arms just thinking about it.

Designing a user interface in Figma with hand gestures (Uh-oh)

Typing lines of code on a virtual keyboard (Seriously?)

Playing a game for an hour (say, a typical PC game that has you moving around in 3D space, attacking enemies, etc.)

Skipping 30 songs in a row with air swipes (you’ll probably have a cramp by the time you reach the song you wanted)

These examples might sound extreme, and we already know we can’t use hand gestures for everything just because they look cool. But thinking about what won’t work will eventually help us understand what hand gestures are good at.

2. The type of gestures can be almost infinite, but how can we categorize them?

When we talk about hand gestures as an interaction, everyone pictures something different. Some may first think of mid-air motions, like swiping in the air, while others think of specific postures, like a thumbs-up or a victory sign.

But while I was playing around with Handpose, I especially enjoyed gestures that bring two fingers together, like an index-thumb click or a middle-thumb click. These are interesting as interactions because they provide a sense of touch, which is usually missing from hands-free interaction, and that touch works as ‘feedback’.

For example, air-swiping to the left doesn’t give the user any feedback by itself unless something changes visually or audibly on the screen. But when you touch one finger to another, you can feel it without any secondary feedback, because they’re your own fingers.

That’s why Handzplay offers three types of gestures as triggers for creating a rule. I don’t think they capture every possible hand gesture yet, but this is how I’m categorizing them for now.

‘Pose’ covers specific postures you’re probably already familiar with, like thumbs-up, victory, and pointer. Their meaning depends heavily on your cultural background.

‘Action’ is a movement of your hands, like swiping in the air. It can be linked to changing the state of elements on the screen or playing audio, but unlike the other types, it doesn’t depend on where your hands are at a given moment, only on how they move.

And ‘Fingers’ is a type of interaction detected by the relationship between fingers. For example, you can create a trigger like ‘if my left index finger and left thumb are within 10px of each other’, which means those two fingers are touching.
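To make the difference between ‘Action’ and ‘Fingers’ triggers concrete, here is a minimal sketch under stated assumptions. The Fingers check compares the straight-line distance between two fingertip points against the 10px threshold from the example above. The Action check ignores where the hand is and only looks at how far it traveled horizontally within a short time window. The function and class names, the 150px and 0.5s swipe parameters, and the idea of feeding in one horizontal position per frame are hypothetical, not taken from Handzplay; in practice the points would come from the hand-tracking model’s predicted keypoints (for example, the thumb tip and index fingertip).

```python
import math
from collections import deque
from typing import Deque, Optional, Tuple

Point = Tuple[float, float]  # (x, y) in camera-image pixels


def fingers_touching(a: Point, b: Point, threshold_px: float = 10.0) -> bool:
    """'Fingers' trigger: two fingertips count as touching when they are
    within threshold_px pixels of each other (straight-line distance)."""
    return math.dist(a, b) <= threshold_px


class SwipeDetector:
    """'Action' trigger (hypothetical): fires when the hand travels far enough
    horizontally within a short time window, regardless of where it is."""

    def __init__(self, min_distance_px: float = 150.0, max_duration_s: float = 0.5):
        self.min_distance_px = min_distance_px
        self.max_duration_s = max_duration_s
        self.history: Deque[Tuple[float, float]] = deque()  # (timestamp, x)

    def update(self, t: float, x: float) -> Optional[str]:
        self.history.append((t, x))
        # Forget samples that fall outside the time window.
        # The newest sample is always kept, so history is never empty here.
        while t - self.history[0][0] > self.max_duration_s:
            self.history.popleft()
        dx = x - self.history[0][1]
        if abs(dx) < self.min_distance_px:
            return None
        self.history.clear()  # don't fire twice for the same motion
        # Direction is in image coordinates; flip it if the feed is mirrored.
        return "swipe_right" if dx > 0 else "swipe_left"


# Fingers: thumb tip and index tip about 9.2px apart count as touching.
print(fingers_touching((100.0, 100.0), (106.0, 107.0)))  # True

# Action: the hand moves 170px to the left within 0.2 seconds.
swipe = SwipeDetector()
for t, x in [(0.0, 500.0), (0.1, 420.0), (0.2, 330.0)]:
    result = swipe.update(t, x)
print(result)  # swipe_left
```

One caveat with a fixed pixel threshold: a hand farther from the camera appears smaller, so the same physical pinch covers fewer pixels.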

3. What would it look like to onboard users to use hand gestures?

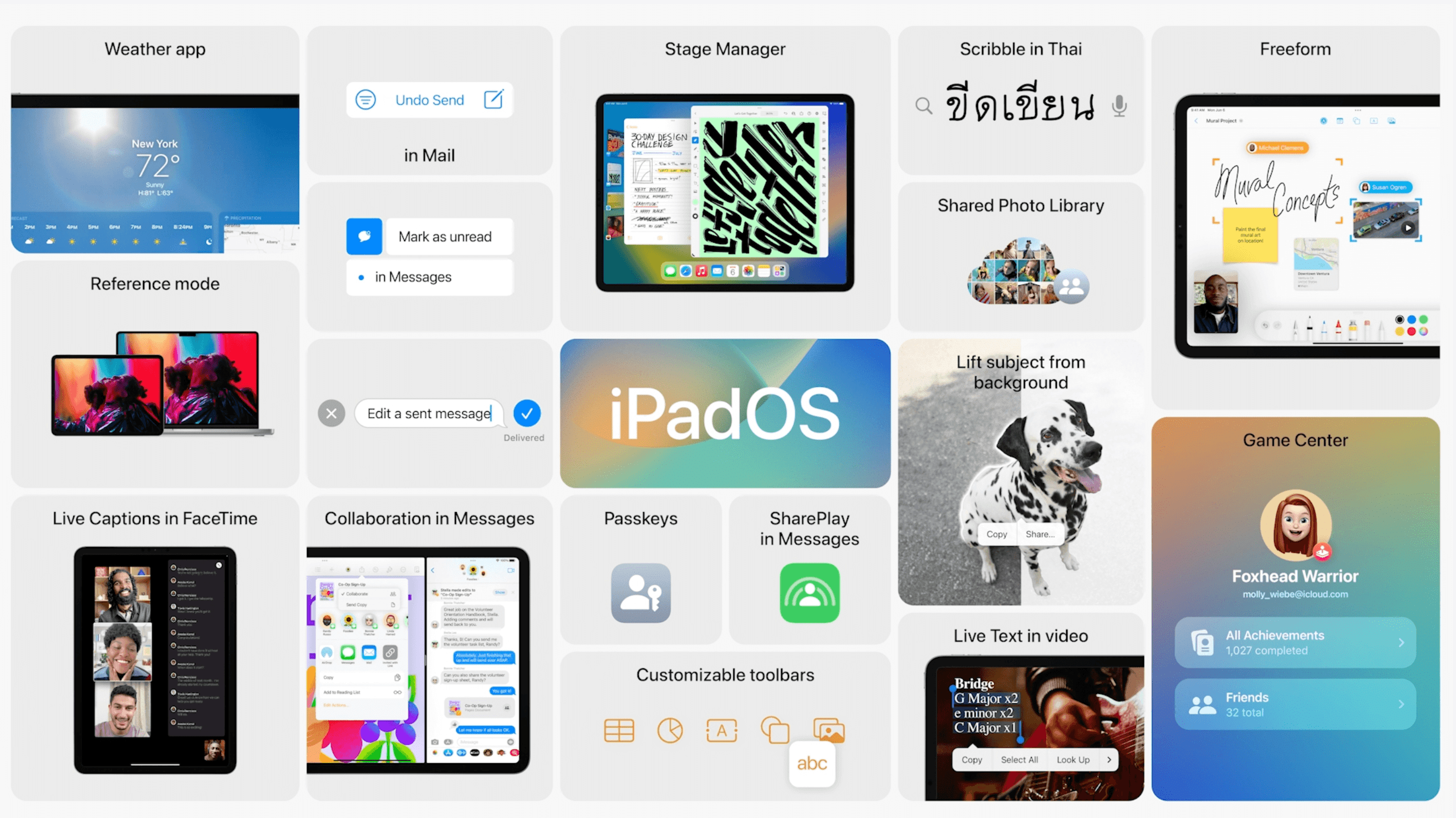

Whenever a new type of interaction is introduced, from peripherals like a mouse, keyboard, or game controller to voice interaction, the time it takes users to get used to it varies. If we were to implement hand gestures as an interaction, what would the learning curve look like for newcomers? To make this easier to imagine, let’s say we’re creating a smart TV app you can control with hand gestures, saving users the frustration of hunting for the remote.

It depends on how many gestures the app needs, but the first step would be to let users know that their hands act as both a cursor and a TV remote. Visualizing their hands on screen would help communicate that. Just as a button changes when a mouse cursor hovers over it, a button that reacts when it’s ‘hovered’ by your hand would also help. That might be the perfect moment to tell users they can use a certain pose to click it. But what makes an adequate ‘click’ pose? Every user has a different mental image of a ‘click’ gesture, so it’s important to choose something that feels natural to most people and is still acceptable to those who wouldn’t have thought of it first.
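The hand-as-cursor idea can be sketched as a small hit test: map a fingertip position from camera pixels to screen pixels, then derive an ‘idle’, ‘hovered’, or ‘pressed’ state for a button depending on whether the cursor is over it and whether the click pose is detected. This is a hypothetical sketch for the smart TV example, not how Handzplay works; `Button`, `camera_to_screen`, and `button_state` are illustrative names, and the x-mirroring assumes the raw camera frame is not already mirrored.

```python
from dataclasses import dataclass
from typing import Tuple

Point = Tuple[float, float]


@dataclass
class Button:
    """A rectangular on-screen target, in screen pixels."""
    x: float
    y: float
    width: float
    height: float

    def contains(self, p: Point) -> bool:
        px, py = p
        return self.x <= px <= self.x + self.width and self.y <= py <= self.y + self.height


def camera_to_screen(p: Point, camera_size: Tuple[int, int], screen_size: Tuple[int, int]) -> Point:
    """Scale a fingertip position from camera pixels to screen pixels, mirroring x
    so that moving the hand to the user's right moves the cursor right
    (assumes the raw camera frame is not already mirrored)."""
    cam_w, cam_h = camera_size
    scr_w, scr_h = screen_size
    return ((cam_w - p[0]) / cam_w * scr_w, p[1] / cam_h * scr_h)


def button_state(button: Button, cursor: Point, click_pose: bool) -> str:
    """'idle' when the hand cursor is elsewhere, 'hovered' when it is over the
    button, and 'pressed' when it is over the button while the click pose is held."""
    if not button.contains(cursor):
        return "idle"
    return "pressed" if click_pose else "hovered"


play = Button(x=400.0, y=500.0, width=200.0, height=100.0)
cursor = camera_to_screen((480.0, 240.0), camera_size=(640, 480), screen_size=(1920, 1080))
print(cursor)                             # (480.0, 540.0)
print(button_state(play, cursor, False))  # hovered
print(button_state(play, cursor, True))   # pressed
```

Separating ‘hovered’ from ‘pressed’ gives the app a natural moment to show the hint about the click pose before the user needs it.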

As you assign gestures to more and more functions, it gets really confusing for users. It’s essential to decide which gestures are primary and mandatory and which are secondary, ‘nice-to-have’ gestures, and to consider when and how to teach users these new poses.

4. Some sweet spots would be…

As I’ve alluded to in earlier sections, hand gestures are likely to stay supplementary on small personal devices like phones, tablets, and PCs, since a touchscreen or mouse is easier to use there. But when users are leaning back in a chair or on a couch, or are slightly away from their device while multitasking (cooking, reading, drawing, or writing), hand gestures can be a good option for simple controls.

While the use case on personal devices is quite niche, hand gestures make more sense for large displays, like a TV or projector, or for devices intended for multiple users, which often require additional accessories to interact with.

In the next article: how I built it

In the next article, I’ll unpack the process of building Handzplay. I hope you enjoyed the tool and this article! You can stay posted via the following links.

> Website