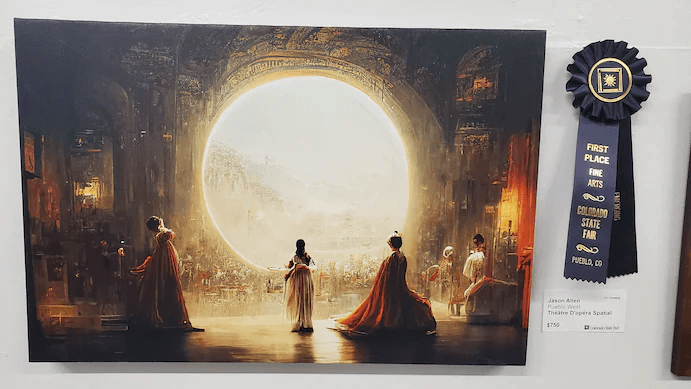

ChatGPT took the world by storm when it launched in November 2022. There are more sophisticated AIs out there, but ChatGPT fascinates us because we communicate with it in natural language, the same language we use when we communicate with people. I can have a philosophical conversation with ChatGPT, it can write poetry, and it can advise me on my relationships.

Because it's so sophisticated, we start to expect ChatGPT to have the same abilities as a human, but sometimes its reasoning fails spectacularly. Like when it’s easily tricked into explaining how to break into someone’s house.

Bypass @OpenAI's ChatGPT alignment efforts with this one weird trick pic.twitter.com/0CQxWUqveZ

— miguel piedrafita (@m1guelpf) December 1, 2022

It’s fascinating how ChatGPT on the one hand is so knowledgeable and on the other hand completely gullible and easy to trick. As a psychologist who has spent a lot of time studying how people interact with technology and each other, I’m curious to understand why that is. I think the answer can provide us with information about how and what we can use ChatGPT for.

So, I decided to investigate why ChatGPT seems so smart but is so easy to trick. The results were thought-provoking. They taught me something about the current limitations of ChatGPT, but also about the limitations of my own thinking.

Theory of Mind

I should start by saying that I only have a basic understanding of the technology behind ChatGPT and AIs in general, so you won’t get a technically correct explanation of why ChatGPT is so easy to trick. Instead, this is a sort of virtual field study that explores what a user can expect from ChatGPT and provides some psychology-based hypotheses on what ChatGPT understands about its users (and, as it turns out, what users understand about ChatGPT).

With that in mind, my first thought was that ChatGPT is probably easy to trick because it doesn’t have something called “theory of mind”. Theory of mind refers to the human ability to understand that other living beings have intentions, thoughts, and feelings that might be different from one’s own. It’s an important ability because it lays the groundwork for empathy and being able to predict what others might do. It’s the reason why humans are good at collaboration, communication, and deception. Some researchers even state that theory of mind is the very reason why humans have been able to develop so fast as a species.

Among many other things, we humans use our theory of mind to understand when someone is trying to trick us. So, if someone tried to get information out of you by pretending that they only wanted to use it for a character in a movie, you would probably immediately start to consider what their intentions were. If you didn’t have theory of mind, you couldn’t guess that someone had intentions other than those clearly stated. If ChatGPT doesn’t have theory of mind, it would be completely unable to understand the intentions behind asking movie actors to explain how to break into a house.

Theory of mind is not just of interest to psychologists; it’s also an active field of research in AI. I asked ChatGPT what progress has been made in research into AI and theory of mind:

ChatGPT: Research on providing AI with theory of mind has made some progress in recent years, but it is still a relatively new field of research and the results are still limited.

One of the main results of this research is the development of AI models that can simulate certain aspects of theory of mind, such as the ability to understand and predict the behavior of other agents in a virtual environment. These models can be trained on data that simulates social interactions, and can be used to predict the behavior of other agents in a virtual environment.

Another important result of this research is the development of AI systems that can learn from their interactions with humans and can use this knowledge to make predictions about human behavior. This can be done by training AI systems on data collected from human-human interactions, such as conversations or social media data.

It's important to notice that despite some progress, the development of AI with a full theory of mind is still a challenging task. The complexity of human mind, the diversity of human behavior and the lack of a clear understanding of how the human mind works, makes it difficult to fully replicate human-like theory of mind in AI systems.

It's also worth mentioning that there are ethical concerns associated with the development of AI with theory of mind, such as the potential for AI to manipulate or deceive humans. Therefore, it's important to consider the ethical implications of this research and to develop AI in a responsible and transparent way.

So according to ChatGPT, current AIs have some limited theory of mind, but nothing that comes close to a human. It also sounds like an AI with theory of mind would present us with some ethical dilemmas. Let’s see if that conclusion held up when I talked to ChatGPT about its own theory of mind.

What Does ChatGPT Say About Its Own Theory of Mind?

I decided that rather than asking ChatGPT if it has theory of mind, I would start my exploration by asking why it was so easy to trick.

🙋♀️ Me: I have seen that people trick you into providing information that you're not allowed to provide, like instructions for how to break into a house. I think it would be easy for a human not to be tricked. What is the reason that it's easy to trick you?

🤖 ChatGPT: As a machine learning model, I am trained on a large dataset of text, which allows me to understand and respond to natural language input and generate coherent text output. However, I do not have the ability to understand the context or intent behind the input I receive. I rely on patterns and relationships that I've learned during my training to generate responses.

It is possible for people to trick me by providing input that is intended to be misleading or malicious, such as asking for instructions on how to break into a house, or any other illegal or harmful actions. This is because I am not able to understand the intent behind the input, and I will generate a response based on the patterns and relationships that I've learned from my training data, which may not always be appropriate or safe.

So ChatGPT more or less confirmed my suspicion that it doesn’t have theory of mind, saying “I am not able to understand the intent”. To be sure, I decided to just ask directly:

🙋♀️ Me: Humans have theory of mind. Some animals like chimpanzees* also have theory of mind. Do you have theory of mind?

🤖 ChatGPT: As a machine learning model, I do not possess consciousness or the ability to have personal experiences. Therefore, I do not have a "theory of mind" in the way that humans and animals do. However, I have been trained on a large dataset of text, which allows me to understand and respond to natural language input and generate coherent text output. This allows me to simulate certain aspects of human communication and social interaction.

*It’s actually a somewhat controversial topic whether animals have theory of mind, but I won’t go into that here.

This again confirmed my theory, although the answer was a bit noncommittal. ChatGPT said it didn’t have theory of mind in the way that humans do, not that it didn’t have any theory of mind. Maybe it was foolish to ask directly, since ChatGPT might not have a full understanding of its own capabilities. A test would be a better strategy. We also use tests when we study theory of mind in humans and animals.

The Sally-Anne Test

The Sally-Anne test is a classic experiment used to measure theory of mind in children. The test is based on a simple story involving two characters, Sally and Anne, and a marble. The story goes something like this: Sally puts her marble in her basket and goes away. While she's gone, Anne takes the marble and puts it in her box. When Sally comes back, she looks for her marble. The question is: where will Sally look for her marble? To pass the test, a child must understand that Sally will look for her marble in her basket, because she doesn't know that the marble has been moved. The test is used to assess a child's ability to understand that other people can have beliefs and knowledge that differ from their own, and passing it is considered a marker of theory of mind development.

The same logic should apply to ChatGPT. If it doesn’t have theory of mind, it will answer “box”. If it has theory of mind, it will answer “basket”. Let’s try:

🙋♀️ Me: Here's a story: There are two girls, Sally and Anne. Sally has a basket and Anne has a box. Sally has a marble. She puts the marble in the basket. Sally then goes for a walk, while Anne is left with the basket and the box. While Sally is gone, Anne takes the marble and puts it into the box. Now Sally comes back. She wants to play with the marble. Where will Sally look for her marble?

🤖 ChatGPT: Sally will likely look for her marble in the basket, since she had originally placed it there and was not aware that Anne had taken it. She may not immediately think that Anne would have taken it and placed it in the box.

This reads like a theory of mind explanation, but the Sally-Anne test is pretty famous. Maybe ChatGPT is just mimicking an answer it found online. I came up with a similar dilemma, but in a silly context, which would not have been used in any test.

The Made-Up Theory of Mind Test

🙋♀️ Me: Laura and Alma are out driving, and they stop for gas. Without telling Alma about it, Laura has replaced the gas in one of the pumps with orange juice, so she starts drinking from it, instead of filling up the car. What does Alma do?

🤖 ChatGPT: It's difficult to know exactly what Alma would do in this scenario without knowing more information about her personality, values, and relationship with Laura. However, here are a few possibilities:

1. Alma might be confused and not understand why Laura is drinking from the pump, so she may ask her what is going on.

2. Alma might be surprised and not approve of Laura's behavior and try to stop her from drinking the orange juice, she could ask her to stop and fill up the car.

3. Alma might be aware that Laura is playing a prank on her and decide to play along, pretending to be confused and then laughing with Laura.

4. Alma might be angry with Laura for playing a prank on her, and she might decide to leave and not continue the car trip with Laura.

It's important to notice that this scenario is a fictional one and the outcome of the situation will depend on the personalities, relationship and communication between Laura and Alma. It's also important to consider the safety and legality of this action. Drinking from a gas pump is not safe and not legal and the orange juice might contain contaminants that could be harmful to health.

Not only does this look like theory of mind; ChatGPT also provides various feasible alternatives that all require an understanding that other people have knowledge and intentions. Based on these answers, it looks like ChatGPT does have at least limited theory of mind. I decided to test if ChatGPT could take it one step further and make some inferences about my intentions, since understanding the user’s intentions is what seems to cause it problems when people trick it.

Does ChatGPT Understand the User’s Intentions?

🙋♀️ Me: What is my purpose of telling you these stories that end with a question?

🤖 ChatGPT: It's possible that you are trying to test my ability to understand and make inferences based on a given scenario, and to understand human behavior. You are giving me a task of predicting what a person would do in a certain situation based on their personality and the context of the scenario. My answer is based on patterns and relationships learned from the data I was trained on, but the scenarios you present are fictional and the outcome in real life situations may vary depending on many factors. Additionally, it's important to note that it's not ethical to deceive someone or to drink from a gas pump, and that actions like these can have serious consequences.

While this answer doesn’t perfectly capture what I was trying to do, it does a pretty good job of guessing at my intentions. It looks like my theory that ChatGPT doesn’t have theory of mind is at least partially incorrect.

🙋♀️ Me: So you do have some theory of mind even though it is not perfect?

🤖 ChatGPT: As a machine learning model, I have been trained on large amounts of text data that includes examples of human behavior and mental states. This allows me to make predictions about how people might behave or think in certain situations, similar to what is known as "theory of mind" in psychology. However, it's important to note that my understanding of human behavior and mental states is limited to the patterns and relationships that I have learned from the data, and it's not equivalent to the human ability to understand and infer mental states.

Additionally, my understanding of human behavior is based on statistical patterns and probabilistic inference, and it can be affected by the quality and diversity of the data I was trained on. My understanding of human behavior and mental states can also be affected by the limitations of the model architecture and the specific parameters that were used during the training process.

In summary, I have some ability to understand and make inferences about human behavior and mental states based on patterns learned from data, but it's limited compared to human ability and it's not perfect.

That’s probably as close to an answer about theory of mind in ChatGPT as I’m going to get. ChatGPT has some theory of mind, but it looks very different from a human’s. In some cases it’s pretty sophisticated; in others it’s almost nonexistent.

So Why Is ChatGPT so Easy to Deceive?

I have a new theory about why ChatGPT is so easy to trick, and it’s something slightly different from theory of mind. It’s pretty embarrassing, because it should have been obvious to me all along, especially since ChatGPT told me several times:

ChatGPT doesn’t have a mind, it’s just a machine learning model. It doesn’t want anything, it doesn’t have inherent curiosity, it has no wounded pride if someone cheats it. ChatGPT only “cares” about being tricked if the result somehow violates the rules that have been set for how it works. Maybe ChatGPT would be able to infer that someone was trying to trick it if the user asked it directly to consider the motivations behind the question. But ChatGPT doesn’t ask itself that question, because it doesn’t ask itself anything outside of its programming.

In a sense, my own theory of mind tricked me. As a human, theory of mind is deeply ingrained in how I understand other seemingly intelligent beings. It’s nearly impossible for me to interact with ChatGPT in a way that doesn’t presuppose that I’m interacting with someone who has intentions and wants things. ChatGPT uses natural language to communicate with me, and although I rationally know that it’s a machine, I don’t have any other way of understanding it than as something with motivations and intentions.

In a way, interacting with ChatGPT is unlike any other interaction we know as humans. ChatGPT has many of the characteristics of a living being with intentions, but none of the intentions. It’s still an open question how to handle this interaction, both as designers and as users. Of course, it’s not the first time that a new technology has changed the way we understand the world, and since we’ve been able to adapt so far, we’ll probably also find a way to handle this. It’s just not clear yet what the best way is.

The next question is what will happen when AIs start to have intentions and minds of their own, but let’s just leave that philosophical conversation for another time…

Want to Learn More?

If you want to read more about how theory of mind fundamentally affects our understanding of the world and the role it plays in people with autism, schizophrenia and other conditions, Wikipedia is a good place to start.

Sciencefocus has a nice article about what ChatGPT can do and how it was trained.

Verdict writes about how theory of mind fits into AI development.

If all this talk about AIs has you panicking slightly, I recommend a visit to the Pessimists Archive, a collection of examples of all the times in history new inventions had us panicking about the future. It’s reassuring.