Getting ready for a new era. Voice prototyping examples that go beyond a single person and screen.

The way we interact with the plethora of devices surrounding us and filling our lives is different from years ago. Simply put, the days when we interacted with a single device only through touches, presses, and gestures are long gone.

Connectivity between and integrations among devices are a given. Think of Handoff among your Apple devices, turning off your Philips Hue lights through Siri because you’re in bed already and you put your phone on the nightstand that’s just a little too far away, seamlessly switching devices when listening to the new Discover Weekly playlist on Spotify, or the 170+ voice commands you can use while keeping your eyes on the road in that sweet Tesla Model S.

You got the gist, right?

New technologies drive us to interact differently with each other and, subsequently, with our products and devices. One of these technologies is voice. It’s foreseeable that voice will play a more essential role in our everyday lives. I grew up with the rise of the iPod, iPhone, and the world of e-commerce, but a whole new generation, Gen Z, has a fairly different experience. They are growing up ordering pizza and buying movie tickets using Alexa. So far, they use and prefer voice-enabled personal assistants more than older demographic cohorts do.

It’s our job as creators and designers to be ready for a generation of users that interacts differently. We should design as well as prototype meaningful experiences tailored to this. As I believe that many don’t think about incorporating voice in their products (yet) or have a hard time turning voice-focused ideas into actual working prototypes, I would like to stand up for an era where voice prototyping beyond a single person and device becomes more democratized.

Hence, I’d like to share a set of voice prototype examples, made with ProtoPie, showcasing that we, designers, can absolutely prototype immersive, interactive experiences like these and don’t have to be bound by single user-screen experiences only.

In-Car Voice Control

I mentioned Tesla and its 170+ voice commands earlier. Tesla is definitely not the only company doing this; various others, e.g., Mercedes-Benz, Continental, and Bosch, have been working to include voice as part of the driver experience.

In this example, we made an interactive prototype of a car’s center display that runs on a tablet. What would an in-car experience be without a steering wheel? So, we hooked up the tablet to a steering wheel. As you can see in the video, you can use the buttons on the steering wheel to activate the voice assistant and control the prototype.

👉 Try out the in-car voice control prototype.

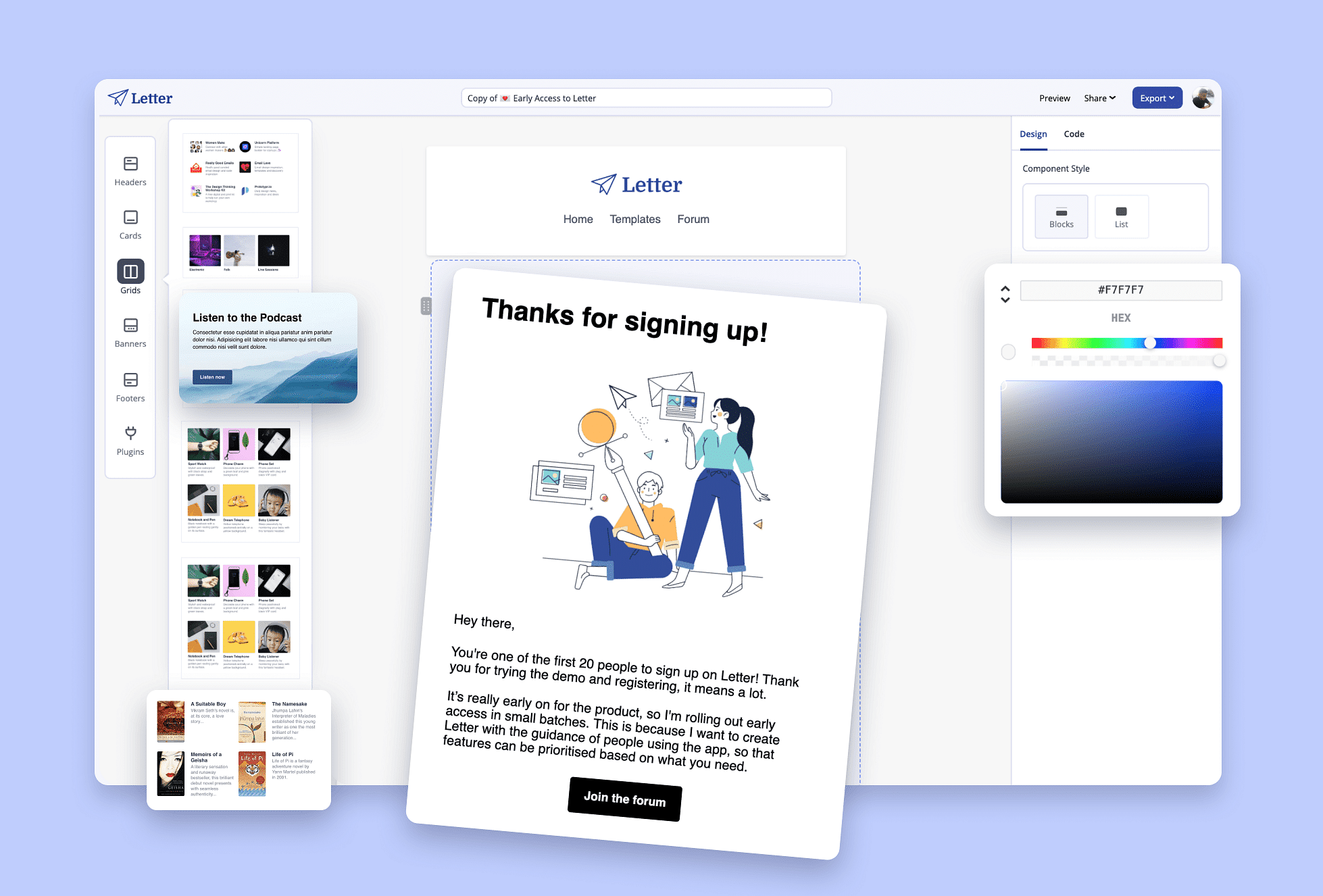


Smart TV Voice Search

Voice can make it a lot easier to access a certain feature more directly compared to having to navigate through a bunch of menus to get there, especially when it comes to smart TVs.

I bet you don’t know anyone who enjoys searching for content on a TV with a remote. Voice search makes this so much easier.

We have a smart TV prototype running on a computer that we connected to an actual TV. The remote control that you see isn’t connected to the TV directly but to the computer instead. As ProtoPie allows for keyboard input, we made sure that the computer recognizes the remote as a Bluetooth keyboard. And voilà, you’ve got voice magic.

👉 Try out the smart TV voice search prototype.

Voice Translation App

I did mention “beyond a single person” before. A great product offering a multi-person voice experience is Google Translate, which supports bilingual conversations.

Hence, the example that we came up with is a bilingual translation app prototype translating an English-Spanish conversation between my colleague Adri and myself over Zoom. See for yourself how that played out.

👉 Try out the voice translation app prototype.

Voice Typing with Google Docs

One of the accessibility features Google added to Google Docs years ago is Voice Typing, which has been incredible for people who can’t type or who prefer using their voice over typing.

The voice typing feature enhances the overall experience of producing text in a Google Doc. You can also tell that voice typing needs visual feedback to show the progress of what has been typed so far. This shows that we shouldn’t approach voice and visual as two separate things, but instead design the experience as a whole.

👉 Try out the Google Docs voice typing prototype.

These are just four examples. As we live in a more connected world, there’s so much more out there and so much more that is possible. All in all, it’s time for designers to care more about immersive experiences that involve voice, rather than treating voice and visual design as two separate things.

Adding voice to interactive prototypes (realistic ones, not your average click-through prototypes) used to be a utopian idea for designers. Not anymore. With ProtoPie, you can blend interaction and conversation design and prototype for a voice-ready future.